This is what happened to your CIO, the queues for the file server hosting his/her user profile was on the same processors as another workload performing backups. If another workload (for example VM B) starts receiving more traffic and one of its queues are mapped to the same processor as a queue from VM A, one of them may suffer. One of the challenges with VMMQ in Windows Server 2016 (Static VMMQ) is that the indirection table – the assignment of a VMQ to be processed by a specific processor – cannot be updated once established. “ this would be about the best place in the entire world to work, if it weren’t for all these complainers…” ) The following day, you’ll be asked to root cause what happened and develop an action plan to ensure the CIO never has this experience again. Your CIO calls in the support team after-hours because of the terrible performance. I think we all know the story that’s about to unfold. Little did you know, your CIO is a night-owl and a few hours later begins working right as some backups begin on the file servers hosting the user profile. Note, the video is sped up to show the process occurring a bit quicker than normal.Īfter a hard day’s work, you head home for the day. Here’s a video showing the Dynamic Coalescing. The system has coalesced or packed all the queues onto one CPU core as was necessary to sustain the workload.

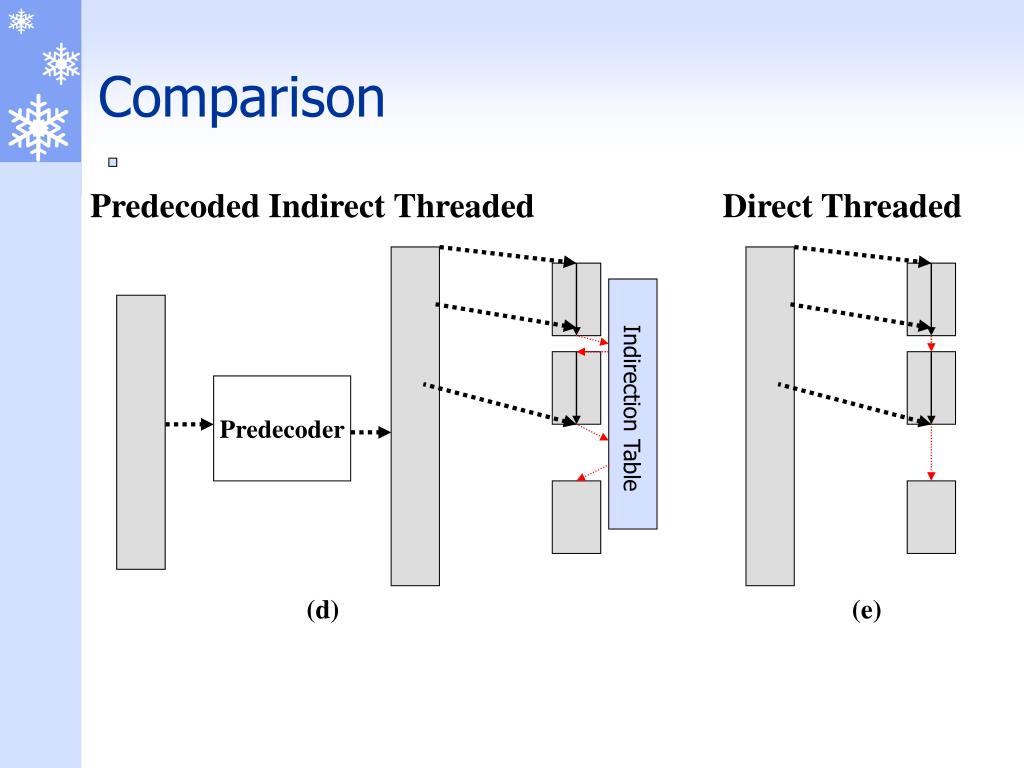

Only one CPU core (the green bar) is processing packets destined for a virtual NIC. You can see we’re using the performance counter Hyper-V Virtual Switch Processor > Packets from External/sec and there is one bar for each CPU core engaged. The picture below shows a virtual NIC receiving a low amount of network traffic. Queue packing is more optimal for the host as the system would otherwise need to manage the distribution of packets across more CPUs the more CPUs are engaged, the more the system must work to ensure all packets are properly handled. When network throughput is low, Dynamic VMMQ enables the system to coalesce traffic received on a virtual NIC to as few CPUs as possible we call this queue packing because we’re packing the queues onto as few CPU cores as is necessary to sustain the workload. I’m starting to think those midnight network slow-downs may be a thing of the past! Automatic tuning of the indirection table (so the VM can meet and maintain the desired throughput).Now in Windows Server 2019 we can dynamically remap VMMQ’s placement of packets onto different processors. However, in part due to the rearchitected design in Windows Server 2016 to bring VMMQ, Dynamic VMQ was not available in Windows Server 2016. Originally, we enabled the dynamic updating of the indirection table, called Dynamic VMQ, in Windows Server 2012 R2. While the indirection table is always established by the OS, we can offload the packet distribution to the NIC when offloaded to the NIC, we call this VMMQ.

The distribution of these packets to separate processors can be done in the OS, or offloaded to the NIC.
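To make the indirection table and queue packing a bit more concrete, here is a toy model in Python. It is only a sketch under loud assumptions: the names (`cpu_load`, `pack_queues`), the queue labels, the packet rates, and the per-core capacity of 1000 are all made up for illustration, and the greedy first-fit placement is a stand-in, not the algorithm Windows Server actually uses. It shows a fixed table where two VMs' busy queues collide on one core, and a remap that spreads busy queues out while packing quiet ones onto a single core.

```python
from collections import defaultdict

def cpu_load(indirection, queue_rate):
    """Sum the packet rate that lands on each CPU for a queue -> CPU table."""
    load = defaultdict(int)
    for queue, cpu in indirection.items():
        load[cpu] += queue_rate.get(queue, 0)
    return dict(load)

def pack_queues(queue_rate, cpus, cpu_capacity):
    """Hypothetical remap: greedy first-fit, busiest queue first.

    Each queue goes to the first CPU that still has room, so as few
    CPUs as possible are engaged (queue packing). If no CPU has room,
    the queue falls back to the least-loaded CPU.
    """
    load = {cpu: 0 for cpu in cpus}
    table = {}
    for queue, rate in sorted(queue_rate.items(), key=lambda kv: -kv[1]):
        target = next(
            (cpu for cpu in cpus if load[cpu] + rate <= cpu_capacity),
            min(cpus, key=load.get),
        )
        table[queue] = target
        load[target] += rate
    return table

# Illustrative numbers only: two queues each for VM A and VM B.
CPUS = [0, 1, 2, 3]
CAPACITY = 1000  # assumed packets/sec one core can sustain

# A fixed (static) table: a busy queue from each VM shares CPU 1.
static_table = {"a0": 0, "a1": 1, "b0": 1, "b1": 2}
busy = {"a0": 100, "a1": 800, "b0": 900, "b1": 100}
quiet = {"a0": 50, "a1": 50, "b0": 50, "b1": 50}

print("static, busy :", cpu_load(static_table, busy))

# Remapping under load keeps every core within capacity.
remapped = pack_queues(busy, CPUS, CAPACITY)
print("remapped     :", cpu_load(remapped, busy))

# Under low traffic every queue is packed onto one core.
packed = pack_queues(quiet, CPUS, CAPACITY)
print("packed, quiet:", cpu_load(packed, quiet))
```

With the static table, CPU 1 carries both busy queues and ends up well over the assumed capacity, which is the CIO's bad night in miniature. Once the table can be rebuilt, the same traffic fits on two cores, and the quiet case collapses onto one.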
